August 12-17, 2001

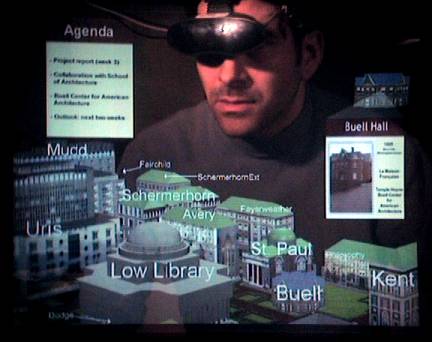

Augmented reality refers to the use of computers to overlay virtual information on the real world. We will demonstrate mobile augmented reality systems (MARS), using see-through head-worn displays with backpack-based computers developed by Columbia University and the Naval Research Laboratory, tracking technology developed by InterSense, and an infrared-transmitter-based ubiquitous information infrastructure from eyeled GmbH. Together, we will create a pervasive 3D information space, integrated with the Emerging Technologies venue, that documents the exhibition itself. We will show some of the user interface techniques that we are developing to present information for MARS, including ones that adapt as the user moves between regions with high-precision six-degree-of-freedom tracking; orientation tracking combined with coarse position tracking; and orientation tracking alone.

Attendees will be able to walk around our installation and the area surrounding it, tracked by a six-degree-of-freedom tracker. The information they view will be situated relative to the 3D coordinate system of the exhibition area. For example, an exhibitor's installation may be surrounded by virtual representations of associated material. In other parts of the exhibition area, tracking will combine inertial head and body orientation trackers with a coarse position tracker based on a constellation of infrared transmitters. In those areas, information will be situated relative to the 3D coordinate system of the user's body, but will remain sensitive to the user's coarse position. As users move between areas of the exhibition floor where different tracking technologies are in effect, the user interface will adapt to use the best one available. Our infrared transmitters will also allow attendees to explore parts of the same information space with their own hand-held devices.
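The adaptation described above can be sketched as a simple priority rule: use six-degree-of-freedom tracking when it is valid, fall back to orientation plus infrared coarse position, and finally to orientation alone, choosing a world- or body-relative frame accordingly. This is a minimal illustration under stated assumptions, not the system's actual implementation: `TrackingMode`, `Frame`, `TrackerStatus`, `best_mode`, and `frame_for` are hypothetical names, and the validity flags are assumed to be reported by the underlying trackers.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class TrackingMode(Enum):
    # Hypothetical modes, listed from most to least capable.
    SIX_DOF = 3             # high-precision position + orientation
    ORIENTATION_COARSE = 2  # inertial orientation + infrared coarse position
    ORIENTATION_ONLY = 1    # inertial orientation alone


class Frame(Enum):
    WORLD = "world"  # registered to the exhibition area's 3D coordinates
    BODY = "body"    # relative to the user's body


@dataclass
class TrackerStatus:
    # Assumed inputs: validity flags reported by each tracker.
    six_dof_valid: bool
    orientation_valid: bool
    ir_region: Optional[str]  # hypothetical ID of the nearest IR transmitter, if any


def best_mode(status: TrackerStatus) -> Optional[TrackingMode]:
    """Return the most capable tracking mode currently available, or None."""
    if status.six_dof_valid:
        return TrackingMode.SIX_DOF
    if status.orientation_valid and status.ir_region is not None:
        return TrackingMode.ORIENTATION_COARSE
    if status.orientation_valid:
        return TrackingMode.ORIENTATION_ONLY
    return None


def frame_for(mode: TrackingMode) -> Frame:
    """Only six-degree-of-freedom tracking supports world-registered content."""
    return Frame.WORLD if mode is TrackingMode.SIX_DOF else Frame.BODY


if __name__ == "__main__":
    # A user standing in an infrared-covered area without 6DOF tracking.
    status = TrackerStatus(six_dof_valid=False, orientation_valid=True, ir_region="booth-12")
    mode = best_mode(status)
    print(mode.name, frame_for(mode).value)  # ORIENTATION_COARSE body
```

Re-evaluating `best_mode` whenever tracker status changes is one way the interface could switch frames as the user walks between areas; in the coarse-position mode, `ir_region` would still be available to select region-specific content.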

The MARS user interfaces that we will present embody three techniques that we are exploring to develop effective augmented reality user interfaces: information filtering, user interface component design, and view management. Information filtering helps select the most relevant information to present, based on data about the user, the tasks being performed, and the surrounding environment, including the user's location. User interface component design determines the format in which this information should be conveyed, based on the available display resources and tracking accuracy. For example, the absence of high-accuracy position tracking would favor body- or screen-stabilized components over world-stabilized ones that would need to be registered with the physical objects to which they refer. View management attempts to ensure that the virtual objects selected for display are arranged appropriately with regard to their projections on the view plane. For example, virtual objects that are not constrained to occupy a specific position in the 3D world should be laid out so that they do not obstruct the view of other, more important physical or virtual objects in the scene. We believe that user interface techniques of this sort will play a key role in the MARS devices that people will begin to use on an everyday basis over the coming decade.
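To make information filtering and view management concrete, the following sketch keeps only items above a relevance threshold and then greedily places each unconstrained item at the first candidate screen position whose rectangle overlaps neither a protected region (the projection of an important object) nor an already placed item. The abstract does not specify these algorithms; the relevance scores, the candidate positions, the greedy strategy, and all names (`Rect`, `InfoItem`, `filter_items`, `place`) are hypothetical stand-ins chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Rect:
    """Axis-aligned rectangle on the view plane (assumed screen units)."""
    x: float
    y: float
    w: float
    h: float

    def overlaps(self, other: "Rect") -> bool:
        return (self.x < other.x + other.w and other.x < self.x + self.w and
                self.y < other.y + other.h and other.y < self.y + self.h)


@dataclass
class InfoItem:
    label: str
    relevance: float           # hypothetical score produced by information filtering
    size: Tuple[float, float]  # (width, height) of the item's projection


def filter_items(items: List[InfoItem], threshold: float) -> List[InfoItem]:
    """Information filtering: keep relevant items, most relevant first."""
    kept = [it for it in items if it.relevance >= threshold]
    return sorted(kept, key=lambda it: it.relevance, reverse=True)


def place(items: List[InfoItem], protected: List[Rect],
          candidates: List[Tuple[float, float]]) -> Dict[str, Rect]:
    """View management: greedily assign each item the first candidate position
    that avoids protected regions and previously placed items. Items with no
    free position are left out of the layout."""
    occupied = list(protected)
    layout: Dict[str, Rect] = {}
    for item in items:
        w, h = item.size
        for x, y in candidates:
            r = Rect(x, y, w, h)
            if not any(r.overlaps(o) for o in occupied):
                layout[item.label] = r
                occupied.append(r)
                break
    return layout


if __name__ == "__main__":
    protected = [Rect(0, 0, 100, 100)]  # projection of an important physical object
    items = [InfoItem("schedule", 0.9, (50, 20)),
             InfoItem("map", 0.4, (50, 20)),
             InfoItem("ad", 0.1, (50, 20))]
    layout = place(filter_items(items, 0.3), protected, [(0, 0), (110, 0), (110, 30)])
    print(layout)
```

Placing the most relevant items first means that, when screen space runs out, it is the less relevant items that are dropped; a real view manager would also need to update the layout as projections change with head motion.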